Disk Pools

About Disk Pools

This software enables administrators to centrally manage pooled storage devices. Pools can also eliminate the need for administrators to monitor usage on each host to determine when it is likely to run out of disk space, and can avoid conventional disk resizing outages. Disk pools allow your physical storage resources to be pooled and then dynamically allocated as needed. When a pool nears depletion, additional storage devices can be added to the pool non-disruptively, and capacity growth is transparent to the host.

Disk pools can be populated with anything from JBOD (just a bunch of disks) enclosures to intelligent storage arrays. Any number of disk pools can be created.

This software uses the binary calculation for capacity. For example, 1 TB = 2^40 B = 1024 GB.
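As a quick illustration of the binary convention, the following Python sketch defines the units as powers of two. The constant names and the `to_tb` helper are illustrative assumptions and are not part of the product.

```python
# Binary (power-of-two) capacity units, matching the convention stated above.
KB = 2**10
MB = 2**20
GB = 2**30
TB = 2**40
PB = 2**50


def to_tb(size_bytes: int) -> float:
    """Convert a byte count to binary terabytes."""
    return size_bytes / TB


print(TB == 1024 * GB)  # True
print(to_tb(PB))        # 1024.0
```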

Requirements

All physical disks added to pools must be:

- Any type of disk recognized by the Windows operating system

- Initialized GPT or uninitialized (This software will initialize GPT when the disks are added to pools.)

- Free of partitions

- No larger than 1 PB

- At least 2 GB in size

- Hard disks may have a sector size of 512 bytes or 4 kilobytes (4K Advanced Format), but hard disks with different sector sizes cannot be added to the same pool. See 4 KB Sector Support for more information and comparisons between sector sizes.

- When using Capacity Optimization (Inline Deduplication and Compression), DataCore recommends at least two physical disks per pool.

Disks marked as "Removable" (such as USB drives) in Windows Disk Management cannot be used in pools.
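The requirements above can be sketched as a simple pre-check. This is a minimal illustration only: the `Disk` class, its fields, and `pool_disk_problems` are hypothetical names, not a SANsymphony API, and the check does not model whether Windows recognizes the disk.

```python
from dataclasses import dataclass
from typing import List, Optional

GB = 2**30
PB = 2**50


@dataclass
class Disk:
    """Hypothetical description of a physical disk (not a product API)."""
    size_bytes: int
    sector_size: int        # bytes per sector: 512 or 4096
    partition_style: str    # "GPT", "MBR", or "RAW" (uninitialized)
    partition_count: int
    removable: bool


def pool_disk_problems(disk: Disk, pool_sector_size: Optional[int] = None) -> List[str]:
    """Return the requirements the disk fails; an empty list means it qualifies."""
    problems = []
    if disk.partition_style not in ("GPT", "RAW"):
        problems.append("must be initialized GPT or uninitialized")
    if disk.partition_count != 0:
        problems.append("must be free of partitions")
    if disk.size_bytes > 1 * PB:
        problems.append("must be no larger than 1 PB")
    if disk.size_bytes < 2 * GB:
        problems.append("must be at least 2 GB")
    if disk.sector_size not in (512, 4096):
        problems.append("sector size must be 512 bytes or 4 KB")
    if pool_sector_size is not None and disk.sector_size != pool_sector_size:
        problems.append("sector size must match the other disks in the pool")
    if disk.removable:
        problems.append("removable disks cannot be used in pools")
    return problems


# Example: a partitioned, removable MBR disk checked against a 4K-sector pool.
usb = Disk(size_bytes=64 * GB, sector_size=512, partition_style="MBR",
           partition_count=1, removable=True)
for problem in pool_disk_problems(usb, pool_sector_size=4096):
    print(problem)
```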

Storage Allocation Units (SAUs)

When virtual disks are created from pools, DataCore SANsymphony software carves units of storage out of available pool resources. These units of storage, called storage allocation units (SAUs), have a size that is predetermined by the user when the pools are created. SAUs can range in size from 4 MB to 1024 MB. The default size is 1024 MB and this size is optimal for most standard applications. All SAUs from a pool will be the same size.

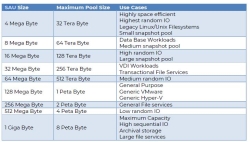

The SAU size must be provided when the pool is created and cannot be changed. The table below provides guidance on the SAU size selection based on the maximum pool size and the associated use cases:

The size of a virtual disk created from a pool must be a multiple of the SAU size. When a virtual disk is created, the amount allocated from the pool is thinly provisioned and consists of one SAU. When the virtual disk is served, the host will discover a disk based on the user-defined logical size. The maximum logical size of a virtual disk is 1024 TB (or 1 PB).
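The sizing rule can be illustrated with a short calculation. The sketch below rounds a requested size up to the next SAU multiple and enforces the SAU range and the 1 PB logical limit stated in this section. The function name `round_up_to_sau` is a hypothetical helper, not a product command.

```python
MB = 2**20
GB = 2**30
TB = 2**40
MAX_LOGICAL_SIZE = 1024 * TB  # 1 PB, the maximum logical size of a virtual disk


def round_up_to_sau(requested_bytes: int, sau_bytes: int) -> int:
    """Return the smallest multiple of the SAU size that holds requested_bytes."""
    if not 4 * MB <= sau_bytes <= 1024 * MB:
        raise ValueError("SAU size must be between 4 MB and 1024 MB")
    if requested_bytes <= 0:
        raise ValueError("requested size must be positive")
    sau_count = -(-requested_bytes // sau_bytes)  # ceiling division
    size = sau_count * sau_bytes
    if size > MAX_LOGICAL_SIZE:
        raise ValueError("virtual disk would exceed 1 PB")
    return size


# A request of 10 GB plus one byte needs 11 SAUs of 1024 MB each.
print(round_up_to_sau(10 * GB + 1, 1024 * MB) // GB)  # 11
```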

Virtual disks are dynamically allocated. After a virtual disk is served to the host, and the host fills the SAU with data, another SAU is automatically and transparently allocated for use by the virtual disk. Additional SAUs continue to be allocated "as needed". So even though the host sees a large disk, only a portion of the logical size may actually be allocated from the pool. This "as needed" allocation is also referred to as "thin provisioning."
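The following sketch models thin provisioning in a simplified way. It assumes that an SAU is allocated the first time a write touches its address range and that the single SAU allocated at creation is the first one; both are modeling assumptions for illustration, and the `ThinDisk` class is hypothetical.

```python
GB = 2**30


class ThinDisk:
    """Toy model of a thinly provisioned virtual disk (illustrative only)."""

    def __init__(self, logical_size: int, sau_size: int):
        if logical_size % sau_size != 0:
            raise ValueError("logical size must be a multiple of the SAU size")
        self.logical_size = logical_size
        self.sau_size = sau_size
        # One SAU is allocated at creation; index 0 is an assumption.
        self.allocated = {0}

    def write(self, offset: int, length: int) -> None:
        """Record a write and allocate any SAUs it touches."""
        if offset < 0 or length <= 0 or offset + length > self.logical_size:
            raise ValueError("write is outside the logical disk")
        first = offset // self.sau_size
        last = (offset + length - 1) // self.sau_size
        self.allocated.update(range(first, last + 1))

    @property
    def allocated_bytes(self) -> int:
        return len(self.allocated) * self.sau_size


disk = ThinDisk(logical_size=100 * GB, sau_size=1 * GB)
disk.write(0, 3 * GB)    # touches SAUs 0, 1, and 2
disk.write(50 * GB, 1)   # a single byte still consumes a whole SAU
print(disk.allocated_bytes // GB, "GB allocated of", disk.logical_size // GB)
```

The host sees the full 100 GB logical size while only 4 GB is drawn from the pool in this example.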

SAU size does not affect the maximum size of a deduplication pool, which has a fixed maximum size. See Deduplication.

Reclamation

Reclamation is the process of writing zeros to physical disks in pools. The reclamation process prepares physical disks in pools to ensure that when virtual disks are created, the SAUs allocated are clean and free of old data. After a disk is added to a pool, the SAUs created from the disk first go into reclamation. As each SAU goes through the reclamation process, that SAU is made available to the pool and is counted as free space. See Pool Usage below for the possible states of SAUs.

Reclamation can take some time, and progress can be monitored in the Info tab on the Disk Pool Details page. SAUs in reclamation cannot be used to create virtual disks. (Virtual disks require sufficient free space in order to be created.)

Reclamation occurs when:

- Physical disks are initially added to pools.

- A previously used virtual disk is deleted (which causes the SAUs to be returned to the pool).

- Auto-reclamation occurs to automatically reclaim SAUs that are "not in use" or "zeroed-out". See Auto-Reclamation below for details.

- The manual reclamation operation is performed. See Reclaiming Unused Virtual Disk Space.

Tiers and Tier Affinity

Disk pools are composed of tiers, which are used to implement the Automated Storage Tiering (auto-tiering) and storage profile schemes. The tier feature is only used for auto-tiering or when storage profiles are used to prioritize virtual disk data.

The number of tiers in a pool is assigned by the user when the pool is created, but can be changed later if necessary. Each disk pool can have from one to 15 tiers; the default number of tiers is three. There should be a significant difference in disk performance between tiers. Three tiers is optimal for most applications.

When physical disks are added to a pool, by default, they are assigned to a mid-range tier which falls in the middle of the top and bottom tiers. To take advantage of disk performance, the disks should be grouped by speed and performance and assigned to the appropriate tier. For instance, if a pool has the default number of tiers, Tier 1 should consist of the fastest physical disks in the pool and Tier 3 should consist of the slowest physical disks.

Tier Affinity

When a virtual disk is created, the user assigns a storage profile to it. The storage profile contains a performance class setting which designates the priority of the virtual disk data. The default setting is Normal, but that setting can be changed in order to assign a higher or lower data priority to the virtual disk. When virtual disks are assigned storage profiles based on priority, and pool disks are assigned to tiers based on performance, this results in a correlation between virtual disk priority and disk performance. Virtual disks with higher priority will be allocated from disks with better performance, and virtual disks with lower priority will be allocated from disks with worse performance. This is the storage profile scheme.

When storage is allocated for a virtual disk, SAUs are allocated from tiers based on the priority of the virtual disk. In order to determine the tiers to use, a "tier affinity" is calculated based on the storage profile and the maximum number of tiers in the disk pool. The tier affinity defines the preferred tiers to use when storing data for that particular virtual disk. SAUs for a virtual disk will reside within the tier affinity unless space is unavailable.

A system health monitor will monitor the tier affinity of SAUs in each pool and will issue a System Health message if more than 1% of the SAUs are out of affinity.
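The 1% rule can be expressed as a small calculation. In this sketch each SAU is represented by a pair of its current tier and the tier affinity of the virtual disk that owns it; this representation and the function names are assumptions for illustration, not the monitor's actual implementation.

```python
from typing import FrozenSet, List, Tuple

# (current tier of the SAU, tier affinity of the owning virtual disk)
SauRecord = Tuple[int, FrozenSet[int]]


def out_of_affinity_percent(saus: List[SauRecord]) -> float:
    """Percentage of SAUs residing outside their virtual disk's tier affinity."""
    if not saus:
        return 0.0
    outside = sum(1 for tier, affinity in saus if tier not in affinity)
    return 100.0 * outside / len(saus)


def needs_health_message(saus: List[SauRecord], threshold_percent: float = 1.0) -> bool:
    """True when more than threshold_percent of the SAUs are out of affinity."""
    return out_of_affinity_percent(saus) > threshold_percent


affinity = frozenset({1, 2})
pool = [(1, affinity)] * 197 + [(3, affinity)] * 3   # 3 of 200 SAUs on tier 3
print(out_of_affinity_percent(pool))   # 1.5
print(needs_health_message(pool))      # True
```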

Mirrored virtual disks have a tier affinity for each storage source in the virtual disk.

Virtual disks created from pass-through disks do not have a tier affinity.

The tier affinity for a virtual disk can be viewed in the Info tab of the Virtual Disk Details page.

The use of storage profiles and the tier feature as illustrated above is optional. All settings will use the default values unless specifically changed. When default values are used for all settings, the number of tiers in each pool will be three, all physical disks added to the pool will be assigned to Tier 2, and virtual disk storage profiles will be Normal, which will set the tier affinity to allow the use of all tiers in the pool.

- Tiers are only used for auto-tiering and storage profile schemes. If auto-tiering is not enabled or storage profiles are not used to prioritize data, default settings for tier numbers or physical disk assignments to tiers should not be changed.

- Automated Storage Tiering must be enabled for data migration to occur. If space is unavailable in the tier affinity, storage allocation for a virtual disk must be made from tiers that are out of the tier affinity and that data will never be migrated back into the tier affinity unless Automated Storage Tiering is enabled.

- Data migration used in the auto-tiering feature will affect disk pool performance; so there should be a significant difference in disk performance between tiers in order to justify the migration of data between the tiers. See Automated Storage Tiering for more information.

- Storage profiles are a standard feature and virtual disks can be assigned different storage profiles without a license for Automated Storage Tiering. If non-default storage profiles are assigned to virtual disks, users must always ensure that sufficient physical disk space exists in tiers to accommodate the storage profiles assigned. See Storage Profiles for more information.

Storage Allocation

When a virtual disk is created, a storage profile is assigned to it. The storage profile contains a performance class setting which designates the priority of the virtual disk data. SAUs for a virtual disk are allocated to tiers based on the priority of the virtual disk. A "tier affinity" is calculated based on the virtual disk priority and the maximum number of tiers in the disk pool. The tier affinity defines the preferred tiers to use when storing data for that particular virtual disk. See Storage Profiles for more information.

SAUs are allocated in a round-robin fashion between physical disks in the highest available tier in the tier affinity set of the virtual disk until the tier is fully allocated. When that tier becomes fully allocated, storage allocation for a virtual disk will continue in the next highest available tier in the tier affinity of the virtual disk. If space is unavailable in the tier affinity set, data will be stored on another available tier.

Allocation can be forced out of the tier affinity due to insufficient free space in preferred tiers. When this happens, the data will never be migrated back into the tier affinity set unless Automated Storage Tiering is enabled.
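The allocation order described above can be sketched as follows. The sketch assumes that Tier 1 is the highest tier and that, when the tier affinity is full, the remaining tiers are tried in ascending tier number; the source only says that "another available tier" is used, so that ordering is an assumption. The `allocate` function and the data layout are hypothetical.

```python
from typing import Dict, List, Set, Tuple


def allocate(free: Dict[int, Dict[str, int]], affinity: Set[int],
             count: int) -> List[Tuple[int, str]]:
    """Place `count` SAUs and return (tier, disk) pairs in allocation order.

    `free` maps tier number -> {disk name: free SAUs} and is updated in place.
    Fewer than `count` pairs are returned if the pool runs out of space.
    """
    placed: List[Tuple[int, str]] = []
    preferred = sorted(t for t in free if t in affinity)
    fallback = sorted(t for t in free if t not in affinity)  # assumed order
    for tier in preferred + fallback:
        disks = free[tier]
        # Round-robin over the disks in this tier until it is fully allocated.
        while len(placed) < count and any(disks.values()):
            for name in disks:
                if len(placed) == count:
                    break
                if disks[name] > 0:
                    disks[name] -= 1
                    placed.append((tier, name))
        if len(placed) == count:
            break
    return placed


pool = {
    1: {"ssd-a": 2, "ssd-b": 1},
    2: {"sas-a": 5},
    3: {"sata-a": 5},
}
for tier, disk in allocate(pool, affinity={1, 2}, count=5):
    print(tier, disk)
```

In this example Tier 1 fills after three SAUs, so the last two SAUs come from Tier 2, which is still inside the tier affinity. A real allocator would also remember its round-robin position between calls, which this sketch does not.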

A percentage of space in each disk tier of a pool can be preserved for future virtual disk allocations for use with the auto-tiering feature. See Automated Storage Tiering for more information.

Also see Pool Allocation Tool.

Tier Rebalancing

Tier rebalancing (also known as pool rebalancing) is the process of redistributing SAUs equally across all physical disks within the same tier, thereby helping to improve performance by distributing I/O requests for virtual disks over multiple physical disks.

The rebalancing scheme takes into account the total number of SAUs allocated to each physical disk in a tier and, periodically, will move them around so that each physical disk has the same number of SAUs allocated to it. Since the SAUs are distributed evenly, smaller physical disks will fill up before larger physical disks in the same tier. For example, if a tier has two physical disks with a total allocation of 100 SAUs, the goal of rebalancing is for 50 SAUs to be allocated to each physical disk. But if one disk reaches full capacity after 25 SAUs have been allocated to it, then the remaining disk will contain 75 SAUs.

Tier rebalancing does not distribute SAUs using I/O access patterns, nor does it guarantee that SAUs for each virtual disk are also equally distributed. SAUs that are allocated towards the 'end' of a physical disk are moved first. Tier rebalancing is a standard feature and does not require an Automated Storage Tiering license.
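The 100-SAU example can be reproduced with a small calculation of the target distribution. This is a sketch of the stated goal (an equal count per disk, capped by each disk's capacity), not of the product's actual rebalancing algorithm; `balanced_targets` is a hypothetical helper.

```python
from typing import Dict


def balanced_targets(capacities: Dict[str, int], total_saus: int) -> Dict[str, int]:
    """Spread total_saus as evenly as possible, capping each disk at its capacity.

    `capacities` maps disk name -> maximum number of SAUs the disk can hold.
    """
    if total_saus > sum(capacities.values()):
        raise ValueError("tier cannot hold that many SAUs")
    targets: Dict[str, int] = {}
    remaining = total_saus
    disks_left = len(capacities)
    # Visit the smallest disks first so that their shortfall is passed on
    # to the larger disks that follow.
    for name in sorted(capacities, key=capacities.get):
        fair_share = remaining // disks_left
        targets[name] = min(capacities[name], fair_share)
        remaining -= targets[name]
        disks_left -= 1
    return targets


print(balanced_targets({"small": 25, "large": 1000}, 100))
# {'small': 25, 'large': 75}
```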

Rebalancing will occur under the following conditions:

- A physical disk is added to a disk pool. Storage allocation is rebalanced to include the new physical disk.

- A physical disk is decommissioned from a pool. Storage allocation is rebalanced among the remaining disks in the tier in order to remove the disk. See Removing Physical Disks from Pools.

- The disk tier number of a physical disk is changed. Storage allocation is rebalanced in the original tier and the new tier.

- A migration or new allocation leads to an unbalanced distribution among disks in a tier.

- The disk tier has never been rebalanced before.

Reserved Space

Space can be reserved in pools for exclusive use by virtual disks when virtual disks are created or after creation in the virtual disk settings. Space is reserved per virtual disk and unavailable for use by other virtual disks created from the same pool. The reserved space feature speeds up mirror recoveries for the virtual disk.

Reserved pool space is not pre-allocated at the time it is reserved, but will be dynamically allocated as needed by the virtual disk. Any reserved space is allocated first until all reserved space for the virtual disk has been allocated, then allocation is made from the general free space of the pool.

The reserved space of a virtual disk can range from the size of an SAU up to the entire size of the virtual disk. The total reserved space in a pool can be viewed in the Disk Pool Details page>Info tab.

Reserving space in the pool does reduce the amount of free space in the pool. In order to reserve space in the pool for a virtual disk, there must be enough free space to accommodate the reserved space. (Space in reclamation is not considered free space.) Use caution when reserving space to ensure there is sufficient storage available for other virtual disks in the pool.

Previously set reserved space can be unallocated at any time by setting it to zero. Any unused reserved space will be returned to the pool as free space.
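The accounting described in this section can be sketched with a toy model. It tracks free and reserved space in whole SAUs, draws allocations from a virtual disk's reservation first, and returns unused reserved space to the pool when the reservation is cleared. The `Pool` class and its method names are hypothetical, and SAUs in reclamation are not modeled.

```python
from typing import Dict, Tuple


class Pool:
    """Toy accounting model for reserved space, counted in SAUs."""

    def __init__(self, free_saus: int):
        self.free = free_saus
        self.reserved: Dict[str, int] = {}   # virtual disk -> unused reserved SAUs

    def reserve(self, vdisk: str, saus: int) -> None:
        """Reserve space for one virtual disk; this reduces pool free space."""
        if saus > self.free:
            raise ValueError("not enough free space to reserve")
        self.free -= saus
        self.reserved[vdisk] = self.reserved.get(vdisk, 0) + saus

    def allocate(self, vdisk: str, saus: int) -> Tuple[int, int]:
        """Allocate SAUs, reserved space first, then general free space."""
        from_reserved = min(saus, self.reserved.get(vdisk, 0))
        from_free = saus - from_reserved
        if from_free > self.free:
            raise RuntimeError("pool is out of free space")
        if from_reserved:
            self.reserved[vdisk] -= from_reserved
        self.free -= from_free
        return from_reserved, from_free

    def clear_reservation(self, vdisk: str) -> None:
        """Set the reservation to zero; unused reserved space becomes free."""
        self.free += self.reserved.pop(vdisk, 0)


pool = Pool(free_saus=100)
pool.reserve("vd1", 10)
print(pool.free)                  # 90
print(pool.allocate("vd1", 12))   # (10, 2)
print(pool.free)                  # 88
```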

Also see Automated Storage Tiering for information on preserving a percentage of tier space in pools for new allocations. This auto-tiering feature preserves tier space in a pool, but not for any specific virtual disk.

Auto-Reclamation

Auto-reclamation is the process of automatically reclaiming SAUs that are "not in use" or "zeroed-out" and returning them to the pool without user intervention. Auto-reclamation is executed in memory without issuing I/O reads to the disk and should not impact performance. Auto-reclamation runs with priority over the manual reclamation operation.

Auto-reclamation will occur under these conditions:

- When a host application deletes large amounts of virtual disk data, the host operating system may issue a SCSI unmap command to the DataCore Server indicating that the data is no longer needed. A SCSI unmap of the SAUs flagged as "not in use" will be performed on the virtual disk. Auto-reclamation based on a SCSI unmap command is supported for Windows Server 2012 and ESXi hosts.

- Auto-reclamation will also be performed on single and mirrored virtual disks when a "zero the free space" command, such as SDelete, is issued on the host. The entire SAU must be zeroed-out.

- In addition, all other cases where "zeroed-out" SAUs exist will be reclaimed in time when the SAUs are migrated, such as with Automated Storage Tiering or pool rebalancing. (This case will occur at a slower rate than the first two cases.)

- Auto-reclamation is enabled and cannot be disabled.

- Auto-reclamation is not supported for shared disk pools. Use the manual reclamation operation to reclaim virtual disk space in this case. See Reclaiming Unused Virtual Disk Space.

- Mirrored virtual disks can have different allocation on both sides of the mirror due to tier differences in pools on different servers and other factors. If the allocation is very different, the manual reclamation operation may be run to equalize the space allocation instead of waiting for auto-reclamation to occur which may require SAU migration. See Reclaiming Unused Virtual Disk Space.

- If Continuous Data Protection is enabled for a virtual disk, SAUs cannot be auto-reclaimed until the write operations from hosts have been destaged from the history log to disk.

- Auto-reclamation on failed mirrored virtual disks will stop and be restarted again when both sides are online and recovery finishes.

- When this automatic operation is in progress the status of "Auto-reclamation in progress" will be displayed in the Virtual Disk Details page>Info tab or in the Virtual Disks list>Virtual Disk Summary next to the storage source.

- By default, Microsoft Windows Server 2012, 2016, 2019, and 2022 hosts and VMware ESXi hosts have SCSI UNMAP support enabled. In some cases, this feature can cause the following:

- Disk format operations on the host may take longer than expected.

- IO load to back-end storage on the DataCore Server may increase.

- Retention times for the history log of CDP-enabled virtual disks (vDisks) may drop suddenly.

See DataCore FAQ 1544 for more details.
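To illustrate the condition that the entire SAU must be zeroed-out before it can be reclaimed, the sketch below scans a buffer in SAU-sized chunks and reports which chunks contain only zeros. It is a conceptual illustration with a hypothetical function name and a deliberately tiny SAU size; it does not reflect how the software detects zeroed SAUs internally.

```python
from typing import List


def zeroed_sau_indices(data: bytes, sau_size: int) -> List[int]:
    """Return the indices of SAU-sized chunks that are entirely zero."""
    if sau_size <= 0 or len(data) % sau_size != 0:
        raise ValueError("data length must be a multiple of the SAU size")
    zero_sau = bytes(sau_size)  # a chunk of sau_size zero bytes
    return [
        index
        for index in range(len(data) // sau_size)
        if data[index * sau_size:(index + 1) * sau_size] == zero_sau
    ]


# Three 4-byte "SAUs"; the middle one still holds a single non-zero byte.
data = bytes(4) + b"\x00\x01\x00\x00" + bytes(4)
print(zeroed_sau_indices(data, sau_size=4))  # [0, 2]
```

A single non-zero byte is enough to keep an SAU from qualifying, which is why partially overwritten SAUs are not reclaimed.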

Extending the Size of Pool Disks

We recommend that a physical disk not be extended or resized after it has been added to a disk pool.

When physical disks that exist in disk pools are subsequently extended or increased in size (such as by using RAID functions in an attached intelligent storage array), DataCore SANsymphony software cannot automatically recognize and use the additional space, because Storage Allocation Units (SAUs) are created when the disk is originally added to the pool. However, the new size of the pool disk will be detected and will count toward the Storage Capacity License limit.

There are two methods to allow DataCore SANsymphony software to recognize and utilize a pool disk that has been extended or increased in size in a storage array. These methods can also be used to replace existing pool disks with larger disks that are not necessarily in a storage array.

- Adding a larger disk to the pool and decommissioning the original smaller disk. This process copies the allocated SAUs from the disk being removed to other physical disks in the pool. See Decommissioning Physical Disks for more information.

- Mirroring the original pool disk with a new larger physical disk in the same pool. (The new disk should be at least the same size as the extended or increased size of the original disk.) When the pool mirror disks are completely synchronized, the original disk should be removed from the mirror. Then the software will recognize and use the new larger size in the pool. See Mirroring Pool Disks for information on pool mirrors and Removing a Pool Disk Mirror to break the mirror after synchronization is complete.

The disk pool mirroring method is usually quicker and more convenient than adding a larger physical disk to the pool and decommissioning the original disk.

Additional Features

Additional features to aid in administration and fault tolerance:

- Auto-tiering is the process of identifying read and write access patterns for blocks of data (SAUs) in the pool and either promoting or demoting the SAUs to a higher or lower tier based on that access. The most heavily accessed SAUs will be promoted to higher tiers and the least-accessed SAUs will be demoted to lower tiers. Automated Storage Tiering is a licensed feature. See Automated Storage Tiering and Storage Profiles.

- Disk pools can be "shared" between two or more DataCore Servers in the same server group. Disk pools are considered "shared" when they contain at least one physical disk which is seen by two or more servers. See Shared Multi-port Array Support.

- Deduplication is a technique for reducing allocated storage space by eliminating duplicate blocks of repeating data in virtual disks. The same block of repeating data in a virtual disk can be stored once but referenced repeatedly from multiple locations where it is used. See Deduplication.

- The Allocation View tool provides different views of the allocation for disk pools. It displays the allocation and storage temperature per physical disk and also per virtual disk in a pool. See Pool Allocation Tool.

- Pools have two threshold features that can be customized for each pool. The Available Space Threshold feature issues an alert when free space is getting low and more disks need to be added to pools. The I/O Latency Threshold feature issues an alert when I/O is lagging so that the issue may be diagnosed. See System Health Thresholds.

- Disks in pools can be mirrored for fault tolerance. The sector sizes must be the same. See Mirroring Pool Disks.

- Active physical disks can be removed non-disruptively from a pool by transparently redistributing the data among the remaining disks in the pool. See Removing Physical Disks from Pools.

- Space can be reserved in pools for exclusive use by virtual disks when they are created or later in the virtual disk settings. The space is still dynamically allocated as needed.

- Virtual disk space (SAUs) in disk pools can be reclaimed from virtual disks after files have been deleted by hosts. The unused SAUs are returned to the pool. See Reclaiming Virtual Disk Space in Pools.

Pool Usage

The physical space in a pool (SAUs) will be classified according to the current state in order to monitor pool usage. After physical disks are added to pools and go through the reclamation process, the states of SAUs in pools will fluctuate depending on the creation, use and deletion of virtual disks. An SAU can only be in one state at a time. Pool usage is graphically displayed by a pie chart at the top of the Disk Pool Details page. (Also see the Allocation View tab which displays the temperature of allocated SAUs per physical disk and per virtual disk in a pool.)

Possible States of SAUs in a Pool
