Proxmox: Host Configuration Guide

Overview

This guide provides information on the configuration settings and considerations for hosts that are running Proxmox with DataCore SANsymphony.

The basic installation of the nodes must be carried out according to the Proxmox specifications. Refer to the Proxmox installation guide for details.

Throughout this guide, the Proxmox host is referred to as “PVE”, as this is the name Proxmox uses in its documents.

Version

The guide applies to the following software versions:

- SANsymphony 10.0 PSP18

- Windows Standard Server 2022

- Proxmox version 8.2.2

Configuring the Network

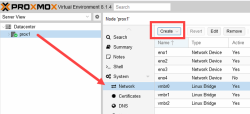

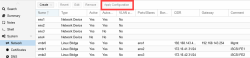

The network configuration is performed in the Proxmox host (PVE) graphical user interface (GUI) under Host > Network.

For each Network Interface Card (NIC) that is required for SANsymphony iSCSI, a "Linux Bridge" vNIC must be created via the Create button (2 x FrontEnd (FE), each with a static IP).

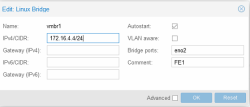

- Name: Enter an applicable name. For example: vmbr[N], where 0 ≤ N ≤ 4094.

- IPv4/CIDR: Enter the IP address and subnet mask in CIDR notation.

- Autostart: Check the box to enable this option.

- Bridge ports: Enter the NIC to be used.

- Comment: It is recommended that a function name is entered here, as this makes it easier to administer the environment.

- Advanced: Select this option to set an MTU size that supports Jumbo Frames. The Comment field is also displayed, which can be used to specify the function of the Linux Bridge.

- OK: Select OK to apply the configuration settings.

- Reset: Click this option to clear the selected options and entered details so that different details can be entered.

The configuration settings applied only become active after clicking the "Apply Configuration" button.
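
The GUI stores these settings in the /etc/network/interfaces file on the node. As a rough sketch of what one such entry looks like, the helper below prints a stanza for a static-IP Linux Bridge. The bridge name vmbr1, the address 172.16.41.11/24, the port eno2, and the MTU of 9000 are illustrative assumptions, not values taken from this guide.

```shell
# Print an /etc/network/interfaces stanza for a static-IP Linux Bridge.
# Usage: print_bridge_stanza NAME IPV4_CIDR BRIDGE_PORT MTU
print_bridge_stanza() {
    printf 'auto %s\n' "$1"
    printf 'iface %s inet static\n' "$1"
    printf '\taddress %s\n' "$2"
    printf '\tbridge-ports %s\n' "$3"
    printf '\tbridge-stp off\n'
    printf '\tbridge-fd 0\n'
    printf '\tmtu %s\n' "$4"
}

# Hypothetical values for a first front-end (FE) bridge.
print_bridge_stanza vmbr1 172.16.41.11/24 eno2 9000
```

Comparing the printed stanza with the file on the node after clicking "Apply Configuration" is a quick way to confirm that the bridge was saved as intended.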

Creating a Cluster

The following steps to create a cluster do not include the information on the quorum device (qdevice) and High Availability (HA) configuration. Refer to the Proxmox Cluster Manager document for detailed information on the qdevice and HA configuration.

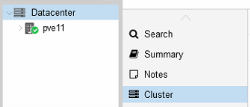

- Under Datacenter > Cluster, click Create Cluster.

- Enter the cluster name.

- Select a network connection from the drop-down list to serve as the main cluster network (Link 0). The network connection defaults to the IP address resolved via the node’s hostname.

Adding a Node

- Log in to the GUI on an existing cluster node.

- Under Datacenter > Cluster, click the Join Information button displayed at the top.

- Click the Copy Information button. Alternatively, copy the string from the Information field.

- Next, log in to the GUI on the applicable node to be added.

- Under Datacenter > Cluster, click Join Cluster.

- Fill the Information field with the Join Information text copied earlier. Most settings required for joining the cluster will be filled out automatically.

- For security reasons, enter the cluster password manually.

- Click Join.

The node is added.

Proxmox iSCSI

Installing iSCSI Daemon

iSCSI is a widely employed technology that is used to connect to storage servers. Almost all storage vendors support iSCSI. There are also open-source iSCSI target solutions available, some of which are based on Debian.

To use iSCSI on Debian, install the Open-iSCSI (open-iscsi) package. This is a standard Debian package; however, it is not installed by default to save resources.

apt-get install open-iscsi

iSCSI Connection

Changing the iSCSI-Initiator Name on each PVE

The Initiator Name must be unique for each iSCSI initiator. Do NOT duplicate the iSCSI-Initiator Names.

- Edit the iSCSI initiator name in the /etc/iscsi/initiatorname.iscsi file to assign a unique name in a way that the IQN refers to the server and the function. This change makes administration and troubleshooting easier.

- Restart iSCSI to take effect using the following command:

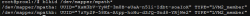

Original: InitiatorName=iqn.1993-08.org.debian:01:bb88f6a25285

Modified: InitiatorName=iqn.1993-08.org.debian:01:<Servername + No.>

systemctl restart iscsid
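
One possible way to build the modified line consistently on every node is a small helper such as the sketch below. The server name pve and the number 01 are hypothetical; the prefix iqn.1993-08.org.debian:01: is the one shown in the example above.

```shell
# Build an InitiatorName line from a server name and a running number.
# Usage: make_initiator_name SERVERNAME NUMBER
make_initiator_name() {
    # IQNs are conventionally lower case, so normalize the server name.
    name=$(printf '%s' "$1" | tr '[:upper:]' '[:lower:]')
    printf 'InitiatorName=iqn.1993-08.org.debian:01:%s%s\n' "$name" "$2"
}

# Hypothetical server name "pve" and number "01".
make_initiator_name pve 01
```

The printed line can be reviewed and then written to /etc/iscsi/initiatorname.iscsi as root, followed by the iscsid restart.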

Discovering the iSCSI Target to PVE

- Before attaching the SANsymphony iSCSI targets, discover all the IQN port names using the following command:

iscsiadm -m discovery -t sendtargets -p <IP-address>:3260

For example: # iscsiadm -m discovery -t sendtargets -p 172.16.41.21:3260

- Attach all the SANsymphony iSCSI targets on each PVE using the following command:

iscsiadm --mode node --targetname <IQN> -p <IP-address> --login

For example:

iscsiadm --mode node --targetname iqn.2000-08.com.datacore:pve-sds11-fe1 -p 172.16.41.21 --login

iscsiadm --mode node --targetname iqn.2000-08.com.datacore:pve-sds11-fe2 -p 172.16.42.21 --login

iscsiadm --mode node --targetname iqn.2000-08.com.datacore:pve-sds10-fe1 -p 172.16.41.20 --login

iscsiadm --mode node --targetname iqn.2000-08.com.datacore:pve-sds10-fe2 -p 172.16.42.20 --login

Refer to the Proxmox iSCSI Installation document for more information.

- Rescan the iSCSI sessions using the following command:

iscsiadm -m session --rescan

For example:

Rescanning session [sid: 1, target: iqn.2000-08.com.datacore:pve-sds11-fe1, portal: 172.16.41.21,3260]

Rescanning session [sid: 4, target: iqn.2000-08.com.datacore:pve-sds11-fe2, portal: 172.16.42.21,3260]

Rescanning session [sid: 2, target: iqn.2000-08.com.datacore:pve-sds10-fe1, portal: 172.16.41.20,3260]

Rescanning session [sid: 3, target: iqn.2000-08.com.datacore:pve-sds10-fe2, portal: 172.16.42.20,3260]
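
With several front-end ports per SANsymphony server, typing each login command by hand is error-prone. The following is a minimal sketch that generates the login commands from a list of IQN and portal pairs; the pairs are the ones from the example above, and the function name print_login_commands is only illustrative.

```shell
# Target IQN and portal IP pairs, taken from the example above.
targets='iqn.2000-08.com.datacore:pve-sds11-fe1 172.16.41.21
iqn.2000-08.com.datacore:pve-sds11-fe2 172.16.42.21
iqn.2000-08.com.datacore:pve-sds10-fe1 172.16.41.20
iqn.2000-08.com.datacore:pve-sds10-fe2 172.16.42.20'

# Read "IQN IP" pairs on stdin and print one login command per pair.
print_login_commands() {
    while read -r iqn ip; do
        [ -n "$iqn" ] || continue   # skip blank lines
        printf 'iscsiadm --mode node --targetname %s -p %s --login\n' "$iqn" "$ip"
    done
}

printf '%s\n' "$targets" | print_login_commands
```

The script only prints the commands. After reviewing the output, it can be piped to sh as root on each PVE to perform the logins.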

Displaying the Active Session

Use the following command to display the active session:

iscsiadm --mode session --print=1

iSCSI Settings

The iSCSI service does not start automatically by default when the PVE-node boots. Refer to the iSCSI Multipath document for more information.

In the /etc/iscsi/iscsid.conf file, the node.startup line must be changed to automatic so that the initiator starts automatically.

The default 'node.session.timeo.replacement_timeout' is 120 seconds. It is recommended to use a smaller value of 15 seconds instead.

If a port is reinitialized, the port may be unable to log in again on its own. In this case, the number of login attempts must be increased in the same file.

Restart the service using the following command:

systemctl restart iscsid
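
The edits to /etc/iscsi/iscsid.conf can also be scripted. The following is a minimal sketch, assuming the file uses the usual "key = value" layout; the helper name set_conf is hypothetical. It covers only the two settings named above (node.startup and node.session.timeo.replacement_timeout), because the login-retry setting is not named in this section.

```shell
# Set "KEY = VALUE" in an iscsid.conf-style file.
# An existing uncommented KEY line is rewritten; otherwise the line is appended.
# Usage: set_conf KEY VALUE FILE
set_conf() {
    key=$1 value=$2 file=$3
    # Escape the dots in KEY so they match literally in the regex.
    key_re=$(printf '%s' "$key" | sed 's/\./\\./g')
    tmp=$(mktemp) || return 1
    if grep -q "^[[:space:]]*${key_re}[[:space:]]*=" "$file"; then
        sed "s|^[[:space:]]*${key_re}[[:space:]]*=.*|${key} = ${value}|" "$file" > "$tmp"
    else
        cat "$file" > "$tmp"
        printf '%s = %s\n' "$key" "$value" >> "$tmp"
    fi
    # Write back through cat so the file keeps its owner and permissions.
    cat "$tmp" > "$file"
    rm -f "$tmp"
}
```

After backing up the file, running set_conf node.startup automatic /etc/iscsi/iscsid.conf and set_conf node.session.timeo.replacement_timeout 15 /etc/iscsi/iscsid.conf as root would apply the two values described in this section.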

Logging in to the iSCSI Targets on Reboot

For each connected iSCSI target, modify the node.startup parameter in the target to automatic. The target is specified in the /etc/iscsi/nodes/<TARGET>/<PORTAL>/default file.
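
Instead of editing each default file by hand, the node record can be updated with iscsiadm. As a sketch, the helper below prints the update command for one target; the function name is illustrative, and the IQN and portal are the first pair from the login example earlier in this guide.

```shell
# Print the iscsiadm command that sets node.startup to automatic for one target.
# Usage: print_autostart_command IQN PORTAL_IP
print_autostart_command() {
    printf 'iscsiadm --mode node --targetname %s -p %s --op update -n node.startup -v automatic\n' "$1" "$2"
}

# Target and portal taken from the login example earlier in this guide.
print_autostart_command iqn.2000-08.com.datacore:pve-sds11-fe1 172.16.41.21
```

The printed command is run as root once per connected target and portal.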

SCSI Disk Timeout

Set the timeout to 80 seconds for all the SCSI devices created from the SANsymphony virtual disks.

To display the current timeout of a device, read its timeout attribute. For example: cat /sys/block/sda/device/timeout

There are two methods that can be used to change the SCSI disk timeout for a given device.

- Use the ‘echo’ command – this is temporary and will not survive the next reboot of the Linux host server.

- Create a custom ‘udev rule’ – this is permanent but will require a reboot for the setting to take effect.

Using the ‘echo’ Command (will not survive a reboot)

Set the SCSI Disk timeout value to 80 seconds using the following command:

echo 80 > /sys/block/[disk_device]/device/timeout
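
When a virtual disk is presented over several paths, each path appears as its own sd device, so the echo has to be repeated for every one of them. The following is a minimal sketch that applies the value to all sd devices whose vendor attribute reads DataCore. The function name and the optional directory argument are assumptions made for illustration; the argument exists only so that the function can be tried against a copy of the directory tree.

```shell
# Set the SCSI timeout to 80 seconds on every sd* device whose vendor is DataCore.
# The optional argument is the sysfs block directory (defaults to /sys/block).
set_datacore_timeout() {
    root=${1:-/sys/block}
    for dev in "$root"/sd*; do
        # Skip entries without a readable vendor attribute.
        [ -r "$dev/device/vendor" ] || continue
        case $(cat "$dev/device/vendor") in
            DataCore*) echo 80 > "$dev/device/timeout" ;;
        esac
    done
}
```

Calling set_datacore_timeout as root applies the setting; like the single echo command, it does not survive a reboot.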

Creating a Custom ‘udev’ Rule

Create a file called /etc/udev/rules.d/99-datacore.rules with the following settings:

- Ensure that the udev rule is exactly as written above. If not, this may result in the Linux operating system defaulting back to 30 seconds.

- There are four blank whitespace characters after the ATTRS{model} string, which must be observed. If not, paths to SANsymphony virtual disks may not be discovered.

Refer to the Linux Host Configuration Guide for more information.
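
For orientation, a rule that fits the description in this section (vendor DataCore, a model string padded with four spaces, a timeout of 80 seconds) might look like the sketch below. The exact rule text is an assumption reconstructed from those constraints and must be checked against the Linux Host Configuration Guide before use; the function name is illustrative.

```shell
# Print a candidate udev rule for SANsymphony virtual disks.
# ASSUMPTION: the rule text is reconstructed from the description in this
# section, not copied from the guide. Note the four spaces after "Virtual Disk".
print_datacore_udev_rule() {
    cat <<'EOF'
SUBSYSTEM=="block", ACTION=="add", ATTRS{vendor}=="DataCore", ATTRS{model}=="Virtual Disk    ", RUN+="/bin/sh -c 'echo 80 > /sys/block/%k/device/timeout'"
EOF
}

print_datacore_udev_rule
```

Once verified, the output could be redirected to /etc/udev/rules.d/99-datacore.rules as root. Generating the file this way avoids losing the trailing spaces when the rule is copied by hand.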

iSCSI Multipath

Installing Multipath Tools

The default installation does not include the 'multipath-tools' package. Use the following commands to install the package:

apt-get update

apt-get install multipath-tools

Refer to the iSCSI Multipath document for more information.

Creating a Multipath.conf File

After installing the package, create the following multipath configuration file: /etc/multipath.conf.

Refer to the DataCore Linux Host Configuration Guide for the relevant settings, and to the iSCSI Multipath document for the adjustments required for the PVE.

defaults {

user_friendly_names yes

polling_interval 60

find_multipaths "smart"

}

blacklist {

devnode "^(ram|raw|loop|fd|md|dm-|sr|scd|st)[0-9]*"

devnode "^hd[a-z]"

}

devices {

device {

vendor "DataCore"

product "Virtual Disk"

path_checker tur

prio alua

failback 10

no_path_retry fail

dev_loss_tmo 60

fast_io_fail_tmo 5

rr_min_io_rq 100

path_grouping_policy group_by_prio

}

}

Restarting the Service

Restart the multipath service using the following command to reload the configuration:

systemctl restart multipath-tools

Refer to the iSCSI Multipath document for more information.

Serving a SANsymphony Virtual Disk to the Proxmox Node

Following the necessary configurations in the SANsymphony graphical user interface (GUI) such as the assignment of the iSCSI port to the relevant host and the virtual disk, the virtual disk must now be integrated into the host.

To make the virtual disk visible in the system, the iSCSI connection must be scanned again using the following command:

iscsiadm -m session --rescan

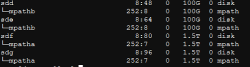

List the block devices to verify that the virtual disk is correctly detected with all its paths. The devices can also be identified by their multipath name “mpathX”.

Command:

lsblk

Output:

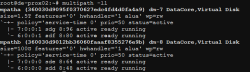

Use the 'multipath' command to determine which paths are available from the SANsymphony server for the virtual disk and whether all the necessary paths are present:

Command:

multipath -ll

Output:

Creating a Proxmox File System

Creating a Physical Volume for the Logical Volume

“pvcreate” initializes the specified physical volume for later use by the Logical Volume Manager (LVM).

Command:

pvcreate /dev/mapper/mpathX

Creating a Volume Group

Command:

vgcreate vol_grp<No> /dev/mapper/mpathX

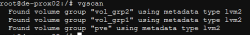

Displaying a Volume Group

Command:

vgscan

Output:

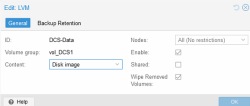

Adding LVM at the Datacenter level

To create a new LVM storage, open the PVE GUI at the Datacenter level, select Storage, and click Add.
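
The same steps can also be carried out on the command line. As a rough sketch, the helper below prints the pvcreate and vgcreate commands from the sections above, followed by a pvesm command that registers the volume group as LVM storage. The device mpatha, the volume group vol_grp1, the storage ID san-lvm1, the content types, and the shared flag are all illustrative assumptions that need to be adapted to the environment.

```shell
# Print the commands that prepare a multipath device for LVM and register it
# as LVM storage in Proxmox.
# Usage: print_lvm_commands MAPPER_DEVICE VG_NAME STORAGE_ID
print_lvm_commands() {
    printf 'pvcreate %s\n' "$1"
    printf 'vgcreate %s %s\n' "$2" "$1"
    # ASSUMPTION: content types and --shared 1 suit a SAN volume seen by all nodes.
    printf 'pvesm add lvm %s --vgname %s --content images,rootdir --shared 1\n' "$3" "$2"
}

# Hypothetical device, volume group, and storage ID.
print_lvm_commands /dev/mapper/mpatha vol_grp1 san-lvm1
```

Only the commands are printed, so they can be reviewed before being run as root on one node of the cluster.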

Finding out the UUID and the Partition Type

The blkid command is used to query information from the connected storage devices and their partitions.

Command:

blkid /dev/mapper/mpath*

Output:

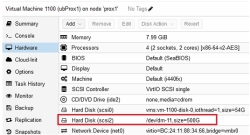

RAW Device Mapping to Virtual Machine

Follow the steps below if an operating system or an application requires a RAW device mapping into the virtual machine:

- After successfully serving the virtual disk (single or mirror) to the Proxmox (PVE) node, run a rescan to make the virtual disk visible in the system using the following command:

iscsiadm -m session --rescan

- Identify the virtual disk to use as a RAW device and its multipath name “mpathX” using the following command:

lsblk

- Navigate to the “/dev/mapper” directory and run the ls -la command to verify which dm-X the required device is linked to.

Output:

- Hot-Plug/Add physical device as new virtual SCSI disk using the following command:

qm set <VM-ID> -scsi<No> /dev/dm-<No>

For Example:
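
A possible way to combine the last two steps is to let readlink resolve the multipath name to its dm device and print the resulting qm command. This is a sketch only: the VM ID 100, the SCSI slot 1, and the device name mpatha used in the usage note are hypothetical, and the function name is illustrative.

```shell
# Resolve a /dev/mapper name to its dm device and print the matching qm command.
# Usage: print_raw_map_command VM_ID SCSI_SLOT MAPPER_DEVICE
print_raw_map_command() {
    # readlink -f follows the symbolic link, e.g. /dev/mapper/mpathX -> /dev/dm-N.
    dm=$(readlink -f "$3") || return 1
    printf 'qm set %s -scsi%s %s\n' "$1" "$2" "$dm"
}
```

On a PVE node, print_raw_map_command 100 1 /dev/mapper/mpatha prints the qm set command for the dm device that mpatha currently links to. The command can then be reviewed and run as root.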

Restarting the Connection after Rebooting the Proxmox Server

Use the following commands to restart the iSCSI connection after the Proxmox (PVE) server has been rebooted:

systemctl restart open-iscsi.service

iscsiadm -m node --login

If an error occurs, then attempt the following command first:

iscsiadm -m node --logout

The connection will restart.